Flowgear Developer tools

With our developer tools, you can create, edit and manage enterprise-grade integrations for your business, your customers and your suppliers in minutes, not months.

What we do for developers

Using Flowgear’s scalable platform, developers and non-technical users alike can create, edit and manage enterprise-grade integrations for their business, customers and suppliers in minutes, not months.

Take advantage of our intuitive, code-free, drag-and-drop visual designer, which includes a comprehensive library of prebuilt connectors, templates and advanced developer tools.

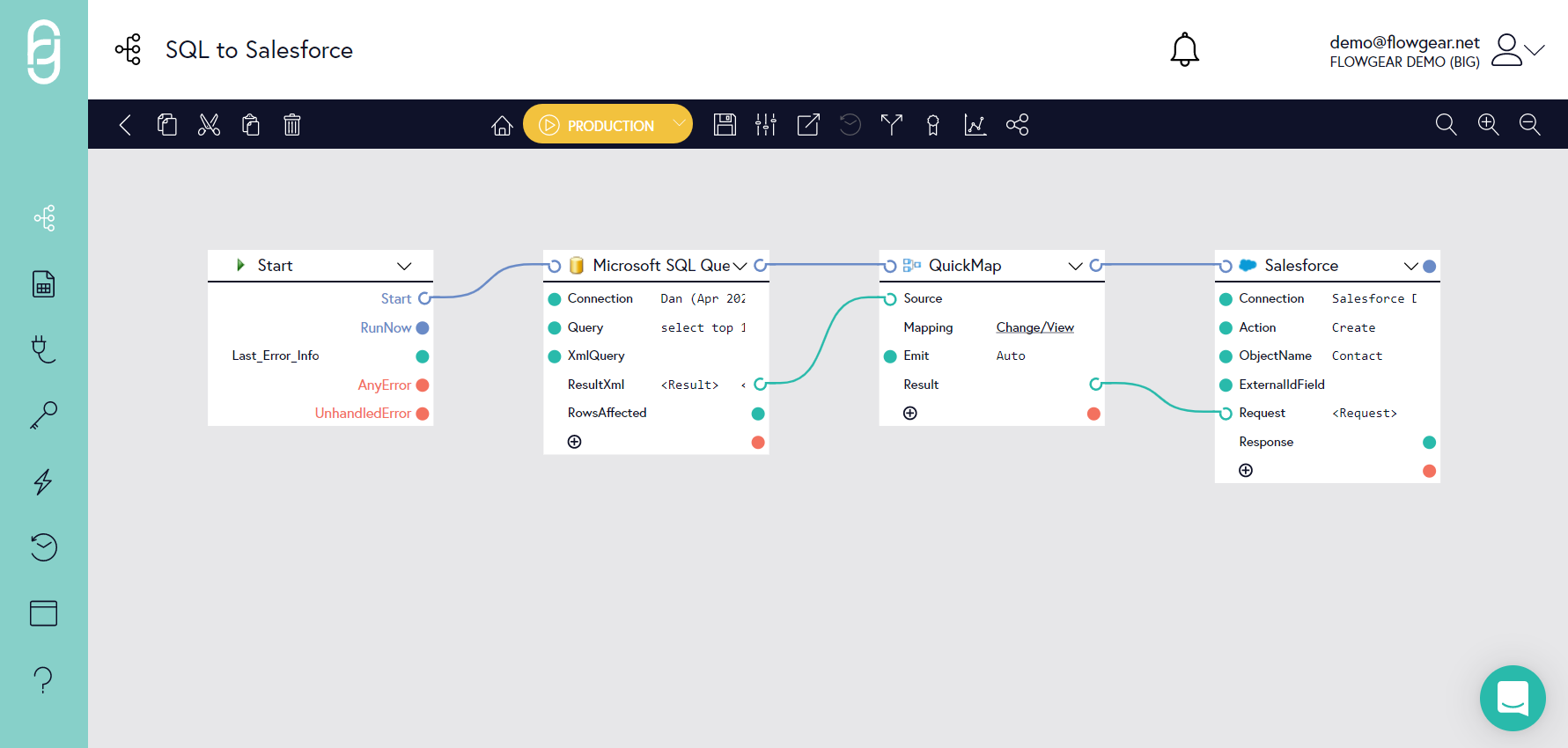

Design console

Drag-and-drop design console

Benefits

- Create complex integration workflows using a rich visual design console

- Start creating integration processes immediately

- For end users, Workflows can be run on demand or enabled for AutoStart. Selected high-level activity information is also presented to end users.

The design console provides agile integration by coupling design and execution, which gives integrators much shorter test cycles and enables an iterative development approach.

Pre-built integrations

Benefits

- Enable rapid implementation cycles: Flowgear Connectors and reusable design components offer cost-effective and easily maintained integration solutions, instead of one-off, custom integrations that are expensive to implement and difficult to maintain

Use one of our pre-built integrations or customize one to your specific requirements

- Product Connectors cover popular enterprise apps for ERP, CRM, e-commerce, and others

- Technology Connectors cover standards & protocols (databases, file structures)

- Standard protocols (REST, SOAP)

- Build and manage APIs. Read how Flowgear works with APIs.

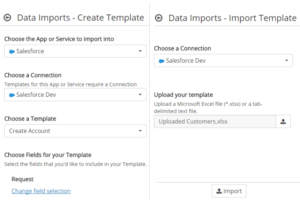

Import data

File types supported

- Microsoft Excel spreadsheets (.xlsx)

- Flat files (tab-delimited)

Applications supported

Import into:

- Most Flowgear Connectors

- Sage One

- Sage Evolution

- SYSPRO

- Microsoft SQL Server

Console interface

- No coding required

- Accessible by non-technical users

Import data into SQL Server

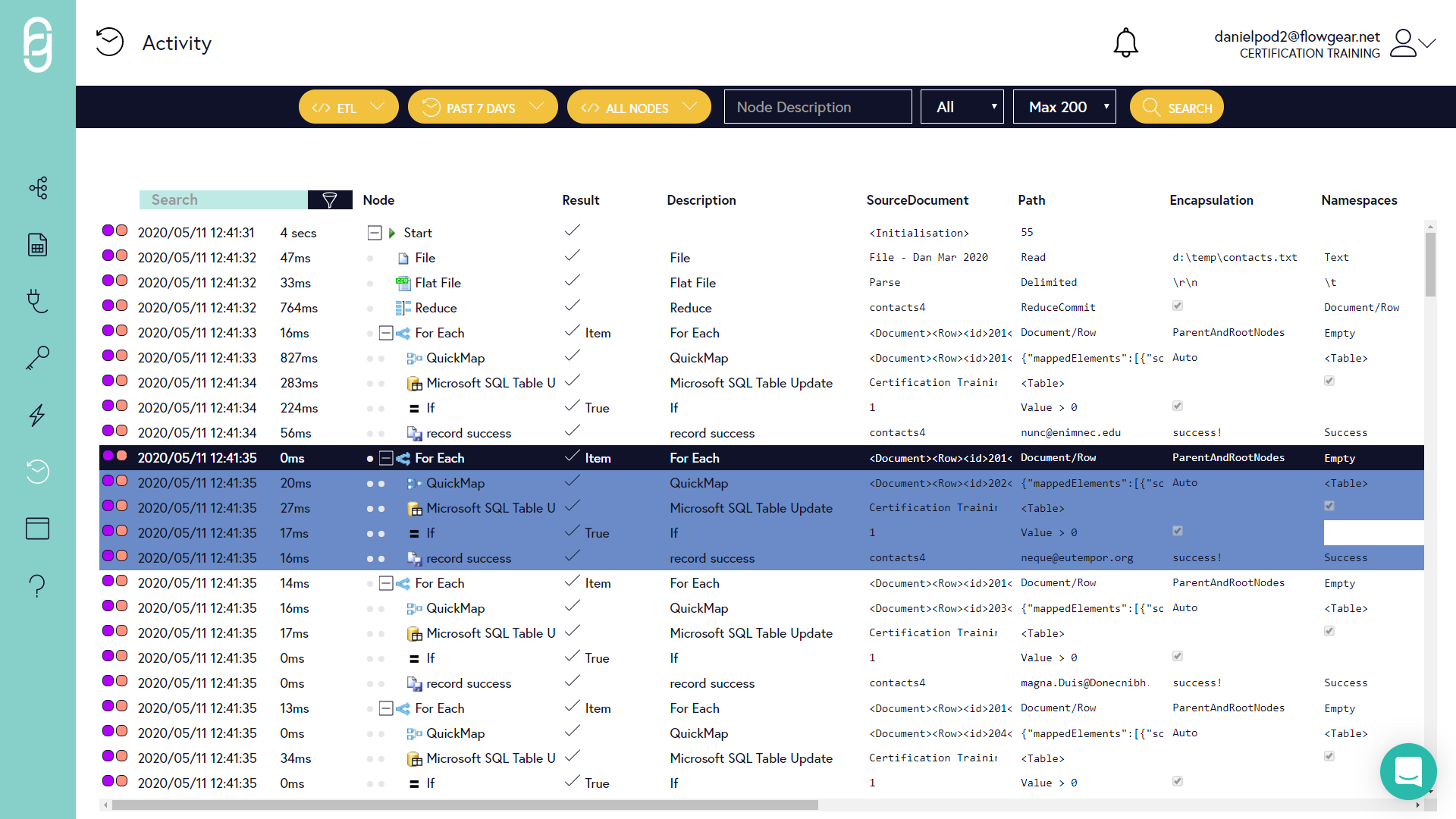

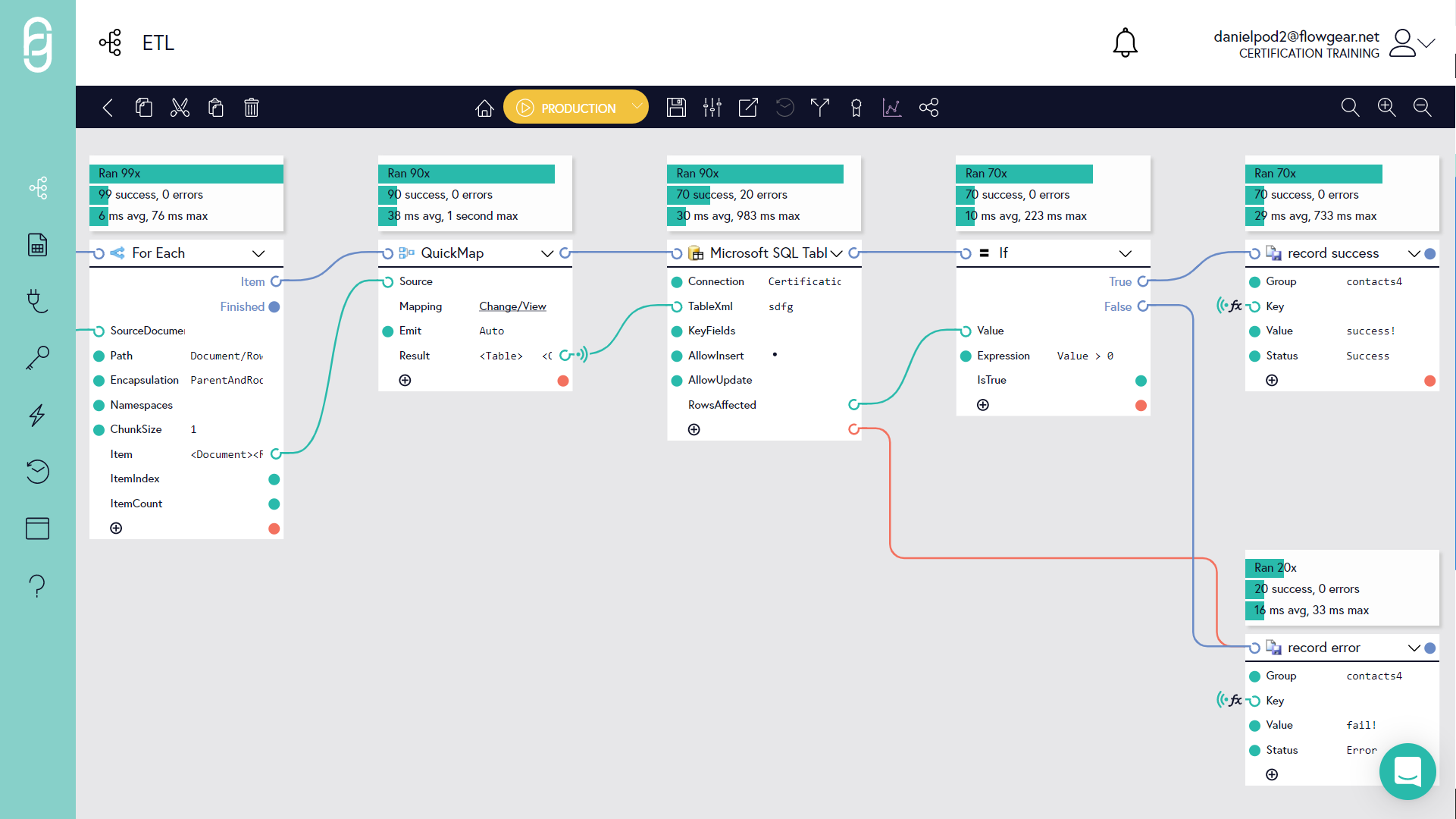

Extract, Transform and Load (ETL) Pattern

Most integration workflows follow these basic steps:

- Extract data from source app or service

- Transform the data into the shape required by the target system

- Load the data into the destination app or service
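The three steps above can be sketched in plain Python. This is only an illustration of the pattern, not Flowgear's implementation: the sample data, the field names and the in-memory `destination` list are all assumptions standing in for a real source and target system.

```python
import csv
import io

# Hypothetical source data: a CSV export from a source app.
SOURCE_CSV = "id,name,amount\n1,Acme,10.50\n2,Globex,7.25\n"


def extract(raw_csv):
    """Extract: read rows from the source as dictionaries."""
    return list(csv.DictReader(io.StringIO(raw_csv)))


def transform(rows):
    """Transform: reshape each row into the (assumed) target schema."""
    return [
        {
            "customerId": int(row["id"]),
            "customerName": row["name"].upper(),
            "amountCents": round(float(row["amount"]) * 100),
        }
        for row in rows
    ]


def load(records, destination):
    """Load: append records to the destination.

    A plain list stands in for the destination app or service.
    """
    destination.extend(records)
    return len(records)


destination = []
loaded = load(transform(extract(SOURCE_CSV)), destination)
```

Keeping each stage as a separate function mirrors the pattern: any one stage can be tested or replaced without touching the other two.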

How to build an Extract-Transform-Load (ETL) workflow in Flowgear

This article outlines the overall pattern and individual steps you would use to create a production-ready ETL integration.

This pattern is suitable for both master and transactional data loading and can work in batch or near-realtime modes (depending on the Trigger you’ve chosen to use).

Best practice tips

- When implementing the Workflow, constrain the data source so that you’re only dealing with a few source records. This makes it easier to find the Activity Logs you’re interested in and ensures you don’t waste time waiting for the Workflow to finish processing a large amount of data each time you test.

- Detecting and handling errors is very important. Include bad records in your source data so you can confirm the Workflow handles them correctly. If you don’t have access to the data source, you can manually modify the data in the Extract Node result Property and then start Workflow execution from the next Node. This causes the extract to be skipped and ensures that your manually prepared data is used as the input to the next stage.

- Multiple types of failure can occur. Complete failures (e.g. due to a bad connection or credentials) should be handled with standard Workflow Error Handling techniques. Bad data errors should be handled by reading the response from the Load Node as shown in this article. It may also be necessary to capture multiple XPaths depending on the class of error.

- Flowgear Statistics can’t be deleted, so while you’re implementing, set a temporary group name that won’t interfere with production datasets.

- You can easily reset the Reducer to simulate fresh data (set the Reset property on the Reducer to do this).
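The distinction between complete failures and bad data errors in the tips above can be illustrated with a small Python sketch. Everything here is an assumption made for illustration: the XML layout of `LOAD_RESPONSE`, the element names and the helper functions are hypothetical and do not describe the actual response of any Flowgear Node.

```python
import xml.etree.ElementTree as ET

# Hypothetical response from a load step; element names are invented.
LOAD_RESPONSE = """
<Result>
  <Row id="1"><Status>OK</Status></Row>
  <Row id="2"><Status>Failed</Status><Error>Customer code not found</Error></Row>
  <Row id="3"><Status>Failed</Status><ValidationError>Quantity must be positive</ValidationError></Row>
</Result>
"""

# One path per class of error, evaluated relative to each Row element.
ERROR_XPATHS = ["Error", "ValidationError"]


def collect_bad_records(response_xml):
    """Return (row id, message) pairs for rows that report a data error."""
    root = ET.fromstring(response_xml.strip())
    bad = []
    for row in root.findall("./Row"):
        for xpath in ERROR_XPATHS:
            node = row.find(xpath)
            if node is not None:
                bad.append((row.get("id"), node.text))
    return bad


def run_load(load_step):
    """Call load_step and separate complete failures from bad data errors."""
    try:
        response = load_step()
    except ConnectionError as exc:
        # Complete failure: nothing was loaded, so there is no response to read.
        return {"failed": True, "reason": str(exc), "bad_records": []}
    return {
        "failed": False,
        "reason": None,
        "bad_records": collect_bad_records(response),
    }


result = run_load(lambda: LOAD_RESPONSE)
```

Including a couple of deliberately bad rows in the test data, as the tips suggest, is what makes it possible to confirm that each error path is captured.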

Revision Management

Revision Management enables developers to review the complete history of changes to a Workflow over time, shows which team members were responsible for each contribution, and supports rollback or forking of a Workflow.

Although all prior Workflow revisions are retained in Flowgear, it is not possible to review historical revisions of Workflows unless your plan includes Revision Management. Refer to Flowgear Pricing to see which subscriptions support this capability.

Release Management

Release Management allows separate revisions of Workflows to be used simultaneously in different Environments (e.g. Test or Production Environments).

Enabling Release Management

Release Management is disabled by default. To enable it, set the Release Management option in the Site Detail Pane to Enabled.

Availability

Release Management is not available on all subscriptions – refer to Flowgear Pricing to see which subscriptions support this capability.

Enhancements to integrations can be built and tested without disrupting Production use.

Dependency Insights

Dependency Insights provides information about how Workflows, Connections and DropPoints interact with each other, with a visual depiction of the relationships between them.

Note that Dependency Insights is not available on all subscriptions – refer to Flowgear Pricing to see which subscriptions support this capability.

Exposes business and technical dependencies so impact scope can be evaluated.

Should you build or buy a software integration solution?

By using the Flowgear integration platform, businesses can focus on the systems that are critical for internal and customer success and leave all the complex integration and automation issues to us!

Debugging and developer insights

Activity Logs

Workflow Activity Logs provide a granular record of each execution step of a Workflow by recording the state of a connector or Workflow Step before and after it executes.

It is important that all activity of users and the integrations themselves is recorded, not only for accountability, but also to be able to diagnose failure causes.

A record is retained of all users involved in Workflow design, and each historical revision of a Workflow is retained as it is developed. This record includes the stages at which the Workflow was promoted into a different profile slot.

Webhooks

Use workflows to efficiently apply webhooks.

A webhook (also called a web callback or HTTP push API) is a useful, lightweight way to implement event triggers.

Webhooks are user-defined HTTP callbacks (or small code snippets linked to a web application) that are triggered by specific events. Whenever a trigger event occurs in the source site, the webhook collects the event data and sends it as an HTTP request to the URL you specify. You can even configure an event in one site to trigger an action in another site.

Webhooks are essentially the reverse of APIs: with an API, the consumer requests data when it needs it; with a webhook, the provider pushes data when an event occurs.
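To make the callback flow concrete, here is a minimal sketch using only the Python standard library. It is not how Flowgear receives webhooks: the `/hooks/orders` path, the event payload and the helper names are assumptions, and a local test server stands in for both the source site and the receiving URL.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

received_events = []  # events pushed to the receiver


class WebhookHandler(BaseHTTPRequestHandler):
    """Receiver side: accepts the HTTP POST sent when an event occurs."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        received_events.append(json.loads(self.rfile.read(length)))
        self.send_response(204)  # acknowledge with no response body
        self.end_headers()

    def log_message(self, format, *args):
        pass  # keep the example quiet


def start_receiver():
    """Start the receiver on a free local port in a background thread."""
    server = HTTPServer(("127.0.0.1", 0), WebhookHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def send_event(url, event):
    """Source side: push the event to the configured URL as an HTTP request."""
    request = urllib.request.Request(
        url,
        data=json.dumps(event).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    # Bypass any system proxy so the loopback call goes direct.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(request) as response:
        return response.status


def demo():
    """Run one event through the receiver and return the HTTP status."""
    server = start_receiver()
    try:
        url = f"http://127.0.0.1:{server.server_port}/hooks/orders"
        return send_event(url, {"event": "order.created", "orderId": 42})
    finally:
        server.shutdown()
        server.server_close()
```

Calling `demo()` sends one event and leaves it in `received_events`. The receiver never polls; it only does work when the source site pushes an event to it.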

Technical benefits

- Get real-time notifications

- Avoid the need for constant polling of the server-side application by the client-side application

- Save time by automating data collection or update processes

Webhooks deliver data to other applications in real-time, making them more efficient for both provider and consumer.

Comma-separated values (CSV)

Read or create CSV files

While every organization has its own approach to exchanging files, a common file format that many accept for standardizing data integration between business applications is CSV.

Flowgear allows you to read CSV files or create CSV files from other sources, such as XML or JSON. You can build powerful data transformations that take data from CSV files and import it into business systems, or integrate and connect data from other applications into a CSV file for analysis or reporting.
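As a rough illustration of converting between CSV and JSON, the following Python sketch uses only the standard library. It is not Flowgear functionality; the function names and sample columns are assumptions, and it assumes a flat list of records that all share the same fields.

```python
import csv
import io
import json


def csv_to_json(csv_text):
    """Read CSV text (with a header row) and return a JSON array of objects."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    return json.dumps(rows, indent=2)


def json_to_csv(json_text):
    """Write a JSON array of flat objects as CSV text with a header row.

    Assumes a non-empty array; the first object supplies the column names.
    """
    records = json.loads(json_text)
    out = io.StringIO()
    writer = csv.DictWriter(
        out, fieldnames=list(records[0].keys()), lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(records)
    return out.getvalue()


sample = "sku,qty\nA-1,3\nB-2,5\n"
as_json = csv_to_json(sample)
round_trip = json_to_csv(as_json)
```

Note that CSV carries no type information, so every value arrives as a string; converting `qty` to a number would be part of the transformation step.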

Automating the manipulation of CSV files is easy with Flowgear Workflows, which can be run on demand, scheduled at specific times, or started when a trigger is activated, such as the arrival of a CSV file in an email.

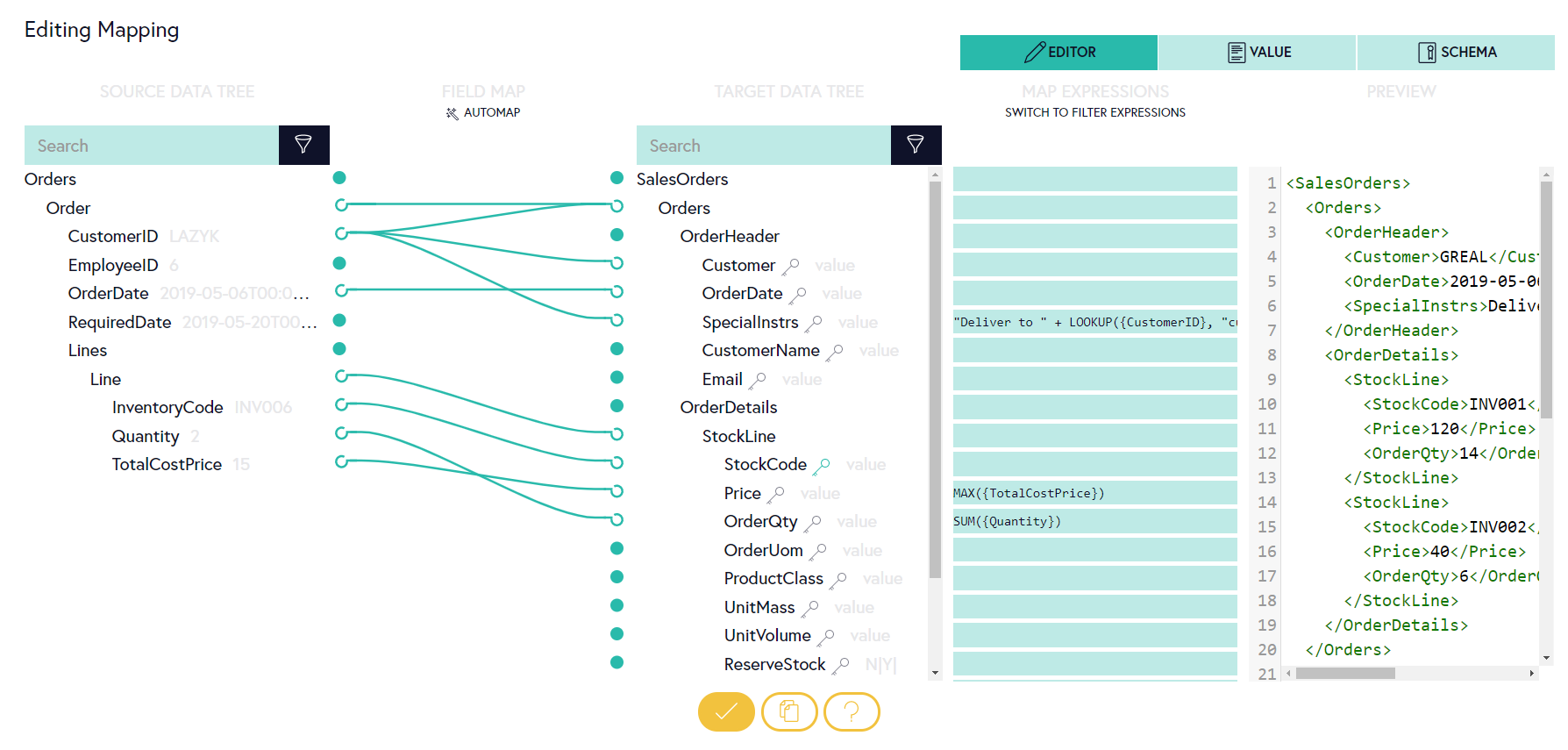

Using Flowgear’s QuickMap Node, you can visually map data from a CSV source file to a different destination file using a rich set of data manipulation and formatting functions.

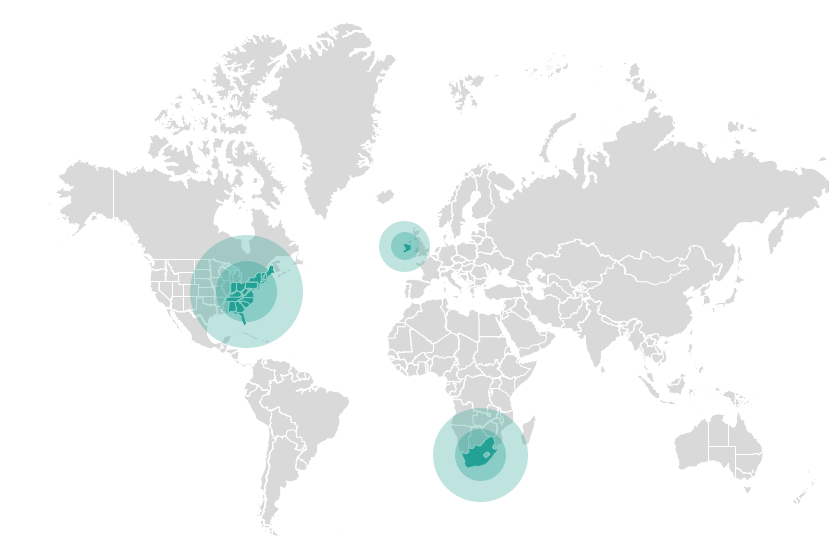

Infrastructure

Flowgear is delivered in three regions:

- US – East US (Azure)

- Europe – North Europe (Azure)

- Africa – South Africa North (Azure)

The choice of multiple regions not only lets customers locate their service in the territory of their choosing but also reduces transactional bandwidth, cost and, by implication, latency.

What developers should consider

Modern organisations depend heavily on an increasing array of apps, systems and services to operate efficiently and grow their businesses, but sharing data between them is one of the biggest challenges facing IT today.

Hand-coded, point-to-point integrations are expensive to implement, unsustainable and uneconomical to maintain, while the traditional Enterprise Service Bus approach requires substantial up-front planning and results in an inflexible solution.

Flowgear’s internationally renowned platform enables organisations of all sizes to build powerful Application, Data and API integrations, whether they’re in the Cloud or on-premises, all from a single interface.

By buying an integration solution rather than building, you as the developer enable greater interoperability between legacy systems and new digital technologies, and enable the organization to implement component-oriented solutions rather than monolithic ones.

The business IT landscape is changing and becoming more distributed, so you need an integration solution that is adaptable; hand-coded systems are usually written for a limited scope of work and don’t lend themselves to quick, reliable changes.

By investing in an integration solution, you drive business value by giving the business opportunities to change more quickly.